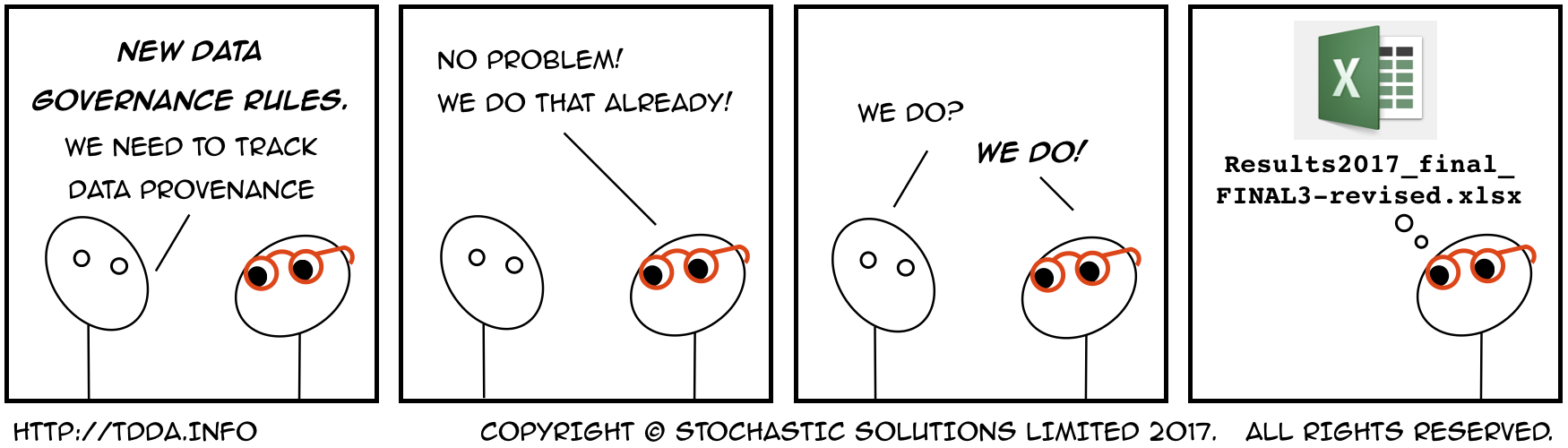

Our Approach to Data Provenance

Posted on Tue 12 December 2017 in TDDA

Our previous post introduced the idea of data provenance (a.k.a. data lineage), which has been discussed on a couple of podcasts recently. This is an issue that is close to our hearts at Stochastic Solutions. Here, we'll talk about how we handle this issue, both methodologically and in our Miró software.

We'll focus on seven key ideas from our approach to data provenance:

- Automatic Logging

- Audit trail stored in datasets

- Recording of field definitions and contexts

- Constraint generation and verification

- Data Dictionary Generation & Import

- Data signatures (hashing)

- Comparing datasets (diff commands)

Automatic Logging

Automatic logging provides a concrete record of all analysis performed in the software.

Our analysis software, Miró, is normally used through a scripting interface that automatically writes several detailed logs. Of these, the most important are:

-

A log of all commands executed (in editable, re-executable form)

-

A full interleaved log of commands issued and the resulting output, in several forms (including plain text and rich HTML).

Logs build up in a year/month/day/session hierarchy indefinitely, providing a comprehensive (and searchable) record of the analysis that has been performed.

Even when the software is used through the API, the sequence of operations is recorded in the log, though in that case the ability to re-execute the operations is normally lost.

Audit trail

The audit trail in a dataset tracks changes to the data and metadata across sessions, users, and machines, making it possible to see the sequence of operations that led to the current state of the data.

Like a table in a database, or a DataFrame in Pandas or R,

Miró's most important data structure is a dataset—a tabular

structure with named fields as columns

and different observations (records) stored in rows.

These form a column-based data store, and datasets can be saved to

disk with a .miro extension—a folder that contains the typed data

together with rich metadata.

Every time a change is made to a dataset, the operation that caused the change is recorded in the Audit Trail section of the dataset. This is true both for changes to data and to metadata:

-

Examples of changes to data in a dataset include creating new fields, deleting fields, filtering out records, appending new records and (more rarely) changing the original values in the data.1

-

Miró maintains many types of metadata, including, field descriptions, field and dataset tags, binnings on fields, long and short names, custom colours and labels, a current record selection (filter) and various formatting information.

In this post, we'll illustrate the most concept using the following small

dataset containing transactions for four different customers, identified

by id:

| id | date | categ | amount | days-since-prev |

|---|---|---|---|---|

| 1 | 2009-01-31 00:00:00 | A | 1,000.00 | ∅ |

| 2 | 2009-02-02 00:00:00 | A | 2,000.00 | ∅ |

| 2 | 2009-02-02 22:22:22 | B | 2,222.22 | 0.93 |

| 3 | 2009-03-03 00:00:00 | A | 1,000.00 | ∅ |

| 3 | 2009-03-03 13:33:33 | B | 3,000.00 | 0.56 |

| 3 | 2009-03-03 23:33:33 | B | 3,333.33 | 0.42 |

| 4 | 2009-04-04 00:00:00 | A | 1,000.00 | ∅ |

| 4 | 2009-04-04 04:44:44 | B | 1,111.11 | 0.20 |

| 4 | 2009-04-04 14:44:44 | B | ∅ | 0.42 |

| 4 | 2009-04-04 20:44:44 | B | 4,444.44 | 0.25 |

Here is the audit trail recorded in that dataset:

| Date | Description | Command | Host | Session | Line |

|---|---|---|---|---|---|

| 2017/12/07 14:05:30 | Load from flat file /Users/njr/python/artists/miro/testdata/trans.csv | load testdata/trans.csv | godel.local | /Users/njr/miro/log/2017/12/02/session142 | 1 |

| 2017/12/07 14:05:30 | Save as /miro/datasets/trans.miro | save trans | godel.local | /Users/njr/miro/log/2017/12/02/session142 | 3 |

| 2017/12/11 12:48:39 | Set dataset description to "Some demo transactions". | description -d "Some demo transactions" | bartok.local | /Users/njr/miro/log/2017/12/11/session013 | 2 |

| 2017/12/11 12:48:39 | Set description for field amount to "transaction value (GBP)". | description amount "transaction value (GBP)" | bartok.local | /Users/njr/miro/log/2017/12/11/session013 | 3 |

| 2017/12/11 12:48:39 | Defined field days-since-prev as (/ (- date (prev-by date id)) 24 60 60) | def days-since-prev (/ (- date (prev-by date id)) 24 60 60) | bartok.local | /Users/njr/miro/log/2017/12/11/session013 | 4 |

| 2017/12/11 12:48:39 | Tag field days-since-prev with L="Time Since Previous Transaction (days)". | tag L="Time Since Previous Transaction (days)" days-since-prev | bartok.local | /Users/njr/miro/log/2017/12/11/session013 | 5 |

| 2017/12/11 12:48:39 | Save as /miro/datasets/trans.miro | save | bartok.local | /Users/njr/miro/log/2017/12/11/session013 | 6 |

In this case, the history is quite short, but includes information about

- where the data originally came from (first line)

- when the data has been saved (second and seventh lines)

- metadata changes (third, fourth and sixth lines)

- changes to the data content (in this case, creation of a new field,

days-since-prev). - detail about when (column 1) and where (column 4) changes were made, in what session these occurred (column 5, linking to the logs), what commands were used (column 3) and where in the log files to find those commands and context (column 6).

Field Definitions and Contexts

Fields remember their definitions, including—where relevant—the context in which they were created. This allows us to understand where any value in a dataset came from, as a sequence of transformations of input values.

In the previous section, we saw that the audit trail contained information about a derived field in the data, including the expression used to derive it. That information is also available as a basic property of the field:

[2]> ls -D days-since-prev

Field Definition

days-since-prev (/ (- date (prev-by date id)) 24 60 60)

In other cases, entire datasets are created by taking "measurements" from a base dataset, such as the transactional data shown above. For example, we might want to create a dataset that measures how many transactions each customer has, and their total value.

One way of doing this is Miró is with the following command:

[4]> xtab -d -R MEASURES count sum amount by id

MEASURES: 4 records; 4 (100%) selected; 3 fields.

which creates a new dataset. As you might expect, this is the result:

| id | count | sumamount |

|---|---|---|

| 1 | 1 | 1,000.00 |

| 2 | 2 | 4,222.22 |

| 3 | 3 | 7,333.33 |

| 4 | 4 | 6,555.55 |

The field definitions in the dataset created by default) are attached to the fields, as we can see:

[6]> MEASURES.ls -D

Field Definition

id Rollup variable [in trans.miro]

count count by id [in dataset trans.miro]

sumamount sum amount by id [in dataset trans.miro]

Of course, the audit trail for MEASURES also contains this information,

together with more detailed information about the session or sessions in which

the relevant commands were issued.

Constraint Generation and Verification

Automatically generated constraints can be used to identify anomalous and possibly incorrect data within a source dataset. The can also be used to check that new data with the same structure has similar or expected properties.

We've talked in previous posts (here, here, here, and here) and in a white paper about automatically generating constraints that characterize either all of, or an inferred "good" subset of, the data in a dataset. Such constraints are useful for finding bad and anomalous data in the original dataset, and also for checking ("verifying") new data as it comes in.

We won't go over all the details of constraint generation and verification in this post, but do note that this relates to Roger Peng's idea, discussed in the last post, of tracking changes to data tests as a surrogate for tracking changes to the actual data. Obviously, having generated constraints, it's a good idea to store the constraints under version control, to facilitate such tracking. More directly, the results of verification allow you to see some changes to data directly.

Data Dictionary

The data dictionary provides a useful reference for any user of the data particularly when it includes helpful (and up-to-date) annotations. By making it easily editable, we encourage users to record useful information alongside the data.

Miró can generate a data dictionary from the data. This contains:

- Summary information about the overall dataset

-

Per-field information, including

- basic metadata about each field, including name and type

- some information about the range of each field, including minimum and maximum values, null count etc.

- further characterization information, including whether there are duplicate values,

- any annotations that have been added to the dataset (such as descriptions, alternate names, tags etc.)

Here's an example of the first part of the data dictionary for our transaction dataset:

| Name | trans.miro |

|---|---|

| Path | /miro/datasets/trans.miro |

| Host | bartok.local |

| Hash | 0971dde52de7bc2fb2ad2282a572f6ca295c33fae105d8b0fab7a618f4c70b71 |

| Description | Some demo transactions |

| Tags | |

| Creation Date | 2017-12-11 13:33:36 |

| Number of Records | 10 |

| Number of Fields | 5 |

And here's the second part, with an entry for each field:

| Name | Type | Min | Max | Min Length | Max Length | # nulls | # empty/zero | # positive | # negative | Any duplicates | Values | Description | Tags | Long Name | Short Name | Definition |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| id | int | 1 | 4 | 0 | 0 | 10 | 0 | yes | ||||||||

| date | date | 2009-01-31T00:00:00 | 2009-04-04T20:44:44 | 0 | 0 | 10 | 0 | no | ||||||||

| categ | string | A | B | 1 | 1 | 0 | 0 | yes | "A" "B" | |||||||

| amount | real | 1,000.00 | 4,444.44 | 1 | 0 | 9 | 0 | yes | transaction value (GBP) | |||||||

| days-since-prev | real | 0.20 | 0.93 | 4 | 0 | 6 | 0 | yes | Time Since Previous Transaction (days) | (/ (- date (prev-by date id)) 24 60 60) |

While some of the information in the data dictionary is derived

directly from the data, other parts (descriptions, alternate names,

and tags) are created by annotation actions, whether by humans, bots or

scripts. Although there are Miró commands for setting all the

(editable) metadata properties, to encourage maximum use, Miró can

also export the metadata to a spreadsheet, where users can update it,

and then the appropriate parts of the metadata can be re-imported

using Miró's importmetadata command.

Data signatures

A data signature is a very compact way to summarize a dataset. This allows quick and efficient checking that analysis is being performed using the correct data.

One of the properties reported in the data dictionary (the first table above) is a hash. If you're not familiar with hashing, a hash function is one that takes a (typically large) input and converts it to a much smaller output. Hash functions are designed so that different inputs tend (but are not guaranteed) to map to different outputs. So if you store the hashes of two large objects and they are different, you can be certain that the objects are different. If the hashes are the same, this does not absolutely guarantee that the objects are the same, but hash functions are designed to make so-called "hash collisions" extremely rare, especially between similar inputs. For most practical purposes, therefore, we can safely assume that if two hashes are the same, the inputs were the same.2

The point of storing the hash is that it acts as a much smaller, very efficient proxy for our original data, and if we want to know whether some dataset we have lying around contains the same data as the one used to generate the data dictionary, all we have to do is compare the hashes.

The hashes that Miró constructs for fields use only the data values, not any metadata, as inputs.3 This is a choice, of course, and we could also hash some or all of the metadata, but our primary concern here is whether we have the same underlying data or not, so we view it as an advantage that the hash is based solely on the data values.

There is an option to store hashes for individual fields, as well, which we have not used in generating the data dictionary shown.

Comparing Datasets (diff commands)

The ability to compare two datasets, and when they are different, to see clearly what the differences are, is as fundamental as the ability to compare two files in Unix or git, or to perform a track changes operation on a Word document.

If we want to compare two datasets to see if they are the same, comparing hashes is a very efficient way to do so.

If, however, we actually want to understand the differences between datasets,

we need something more like Unix's diff command,

which was discussed in the previous post,

or Microsoft Word's Compare Documents functionality.

Miró includes a ddiff command for comparing two datasets.

Let's look at an example.

Here's our transaction data again, without the derived

days-since-prev field:

| id | date | categ | amount |

|---|---|---|---|

| 1 | 2009-01-31 00:00:00 | A | 1,000.00 |

| 2 | 2009-02-02 00:00:00 | A | 2,000.00 |

| 2 | 2009-02-02 22:22:22 | B | 2,222.22 |

| 3 | 2009-03-03 00:00:00 | A | 1,000.00 |

| 3 | 2009-03-03 13:33:33 | B | 3,000.00 |

| 3 | 2009-03-03 23:33:33 | B | 3,333.33 |

| 4 | 2009-04-04 00:00:00 | A | 1,000.00 |

| 4 | 2009-04-04 04:44:44 | B | 1,111.11 |

| 4 | 2009-04-04 14:44:44 | B | ∅ |

| 4 | 2009-04-04 20:44:44 | B | 4,444.44 |

and here's a variant of it:

| id | date | categ | amount |

|---|---|---|---|

| 1 | 2009-01-31 00:00:00 | A | 1,000.00 |

| 2 | 2009-02-02 00:00:00 | A | 2,000.00 |

| 2 | 2009-02-02 22:22:22 | B | 2,222.22 |

| 3 | 2009-03-03 00:00:00 | A | 1,000.00 |

| 3 | 2009-03-03 13:33:33 | B | 3,000.00 |

| 3 | 2009-03-03 23:33:33 | B | 3,333.33 |

| 4 | 2009-04-04 00:00:00 | A | 1,000.00 |

| 4 | 2009-04-04 04:44:44 | B | 1,111.11 |

| 4 | 2009-04-04 14:44:44 | B | 3,874.18 |

| 4 | 2009-04-04 20:44:44 | A | 4,444.44 |

These datasets are small enough that you can probably see the differences by inspection (though it might take a little while to be confident that you've spotted them all), but when there are millions of rows and hundreds of columns, that becomes less easy.

Using the hash trick we talked about previously, we can see whether there

are any differences, and slightly more besides. Assuming the current

working dataset in Miró is the first, and the second is in TRANS2, we can

hash them both:

[46]> hash

19451c13321284f2b0dd7736b75b443945fac1eae08a8118d600ec0d49b6bf87 id

f3c08f1d2d23abaa06da0529237f63bf8099053b9088328dfd5642d9b06e8f6a date

3a0070ae42b9f341e7e266a18ea1c78c7d8be093cb628c7be06b6175c8b09f23 categ

f5b3f6284f7d510f22df32daac2784597122d5c14b89b5355464ce05f84ce120 amount

b89ae4b74f95187ecc5d49ddd7f45a64849a603539044ae318a06c2dc7292cf9 combined

[47]> TRANS2.hash

19451c13321284f2b0dd7736b75b443945fac1eae08a8118d600ec0d49b6bf87 id

f3c08f1d2d23abaa06da0529237f63bf8099053b9088328dfd5642d9b06e8f6a date

fcd11dbd69eee0bf6d2a405a6e4ef9227bb3f0279d9cc7866e2efe5b4c97112c categ

64c5c97e9e9676ec085b522303d75ff11b0ebe01a1ceebaf003719b3718f12bb amount

2e171d2a24183e5e25bbcc50d9cd99ad8b4ca48ee7e1abfa6027edd291a22584 combined

We can see immediately that these are different, but that the individual

hashes for the id and date fields are the same, indicating that

their content is (almost certainly) the same. It's the categ and amount

fields that differ between the two datasets.

We can use the ddiff command to get a more detailed diagnosis:

[49]> ddiff -P TRANS2

Number of differences Field Pair

0: id : id-2

0: date : date-2

1: categ : categ-2

1: amount : amount-2

Diff fields:

1: diff-amount

1: diff-categ

Total number of differences found: 2

The output here confirms that there are no differences between the

id and date fields in the two datasets, but that

one value differs for each of the categ and amount fields.

The -P flag that we passed to the ddiff command told it to

preserve information about the differences, and if we now look at

the data, we see five extra fields (on the first dataset)—the fields

as they were in the other dataset, TRANS2. Miró also creates an overall

diff field showing whether each record has any differences

across the two datasets.

[50] show

| id | date | categ | categ-2 | diff-categ | amount | amount-2 | diff-amount | diff |

|---|---|---|---|---|---|---|---|---|

| 1 | 2009-01-31 00:00:00 | A | A | 0 | 1,000.00 | 1,000.00 | 0 | 0 |

| 2 | 2009-02-02 00:00:00 | A | A | 0 | 2,000.00 | 2,000.00 | 0 | 0 |

| 2 | 2009-02-02 22:22:22 | B | B | 0 | 2,222.22 | 2,222.22 | 0 | 0 |

| 3 | 2009-03-03 00:00:00 | A | A | 0 | 1,000.00 | 1,000.00 | 0 | 0 |

| 3 | 2009-03-03 13:33:33 | B | B | 0 | 3,000.00 | 3,000.00 | 0 | 0 |

| 3 | 2009-03-03 23:33:33 | B | B | 0 | 3,333.33 | 3,333.33 | 0 | 0 |

| 4 | 2009-04-04 00:00:00 | A | A | 0 | 1,000.00 | 1,000.00 | 0 | 0 |

| 4 | 2009-04-04 04:44:44 | B | B | 0 | 1,111.11 | 1,111.11 | 0 | 0 |

| 4 | 2009-04-04 14:44:44 | B | B | 0 | ∅ | 3,874.18 | 1 | 1 |

| 4 | 2009-04-04 20:44:44 | B | A | 1 | 4,444.44 | 4,444.44 | 0 | 1 |

This makes is easy to identify and select only those fields or records with differences, which is one of the key tasks when trying to track data lineage.

As powerful as Miró's ddiff and related commands are, there is also

much more that we would like (and plan) to support. Our comparison is

fundamentally based on joining the two datasets (either on one or more

nominated key fields, or, as in this case, implicitly on record number).

When we are using a join key, it's quite easy to deal with row

addition and deletion, but that is harder when we are just joining on

record number. It would be useful to have a Unix diff-like ability to

spot single rows or groups of rows that have been inserted, deleted,

or re-ordered, but we don't have that today. In certain cases,

spotting other kinds of systematic edits would be interesting—for

example, thinking of the table in speadsheet-like terms, it would be

useful to spot cases in which blocks of cells shift up, down, left or

right. This situation is not very common in the data we most commonly

work with, but there are domains in which those sorts of changes

might be frequent.

What Next?

We surveyed a few of the ways we think about and implement features in our software (and workflows) to help track data provenance and data lineage. There's a great deal more we could do, and over time we will almost certainly add more. Hopefully these ideas will prove useful and interesting, and obviously any of you fortunate enough to use Miró can try them out.

We'll keep you posted as we extend our thinking.

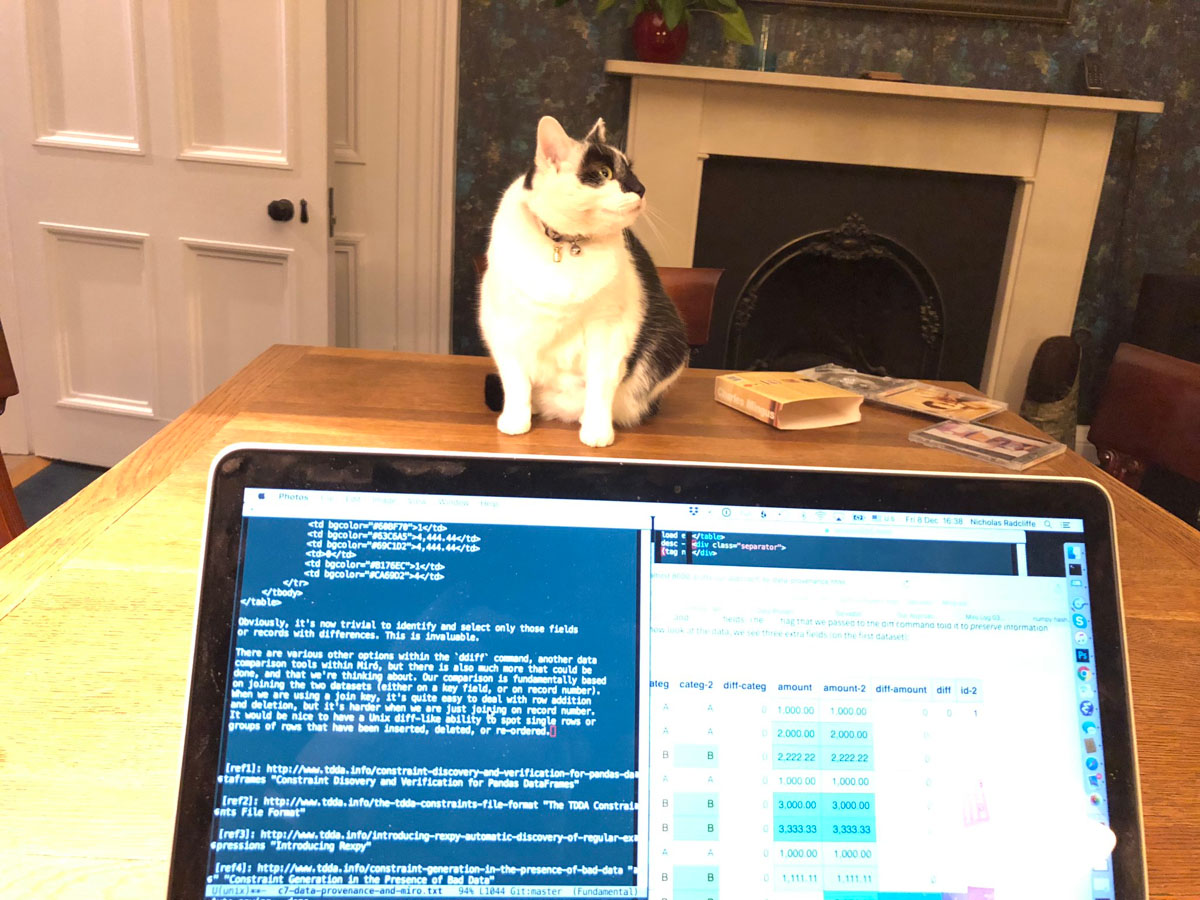

Credits

Thanks to our Social Media Manager, for actively policing our content even as it was being created.

-

Changing (original) values in data is actually so rare that Miró does provides very few facilities for doing so directly, but data can effectively be changed through deleting one field or record and adding another, and there are a couple of operations that can change values in place—primarily anonymization operations and functions that update special, automatically created fields, albeit usually by deletion and regeneration. In fact, one of the rules on our "unwritten list" of good practices is never to replace an input field with an edited copy, but instead always to derive a new field with a variant name, so that when we see a field from source data we know its values have not been altered. ↩

-

A familiar example comes from git, which uses hashes to compare the contents of files efficiently, and also uses a SHA-1 hash to identify a commit, by hashing all of the important information about that commit. ↩

-

The overall hash depends on the name of the fields and their order, as well as the data in the fields, but the individual field hashes do not. As a result, two fields containing the same values (in the same order) will receive the same hash, but datasets in which fields have been renamed or reordered will have different overall hashes. We should also note that missing values (NULLs) also contribute to the field hashes. ↩