Raw markdown for direct use in LLM context windows.

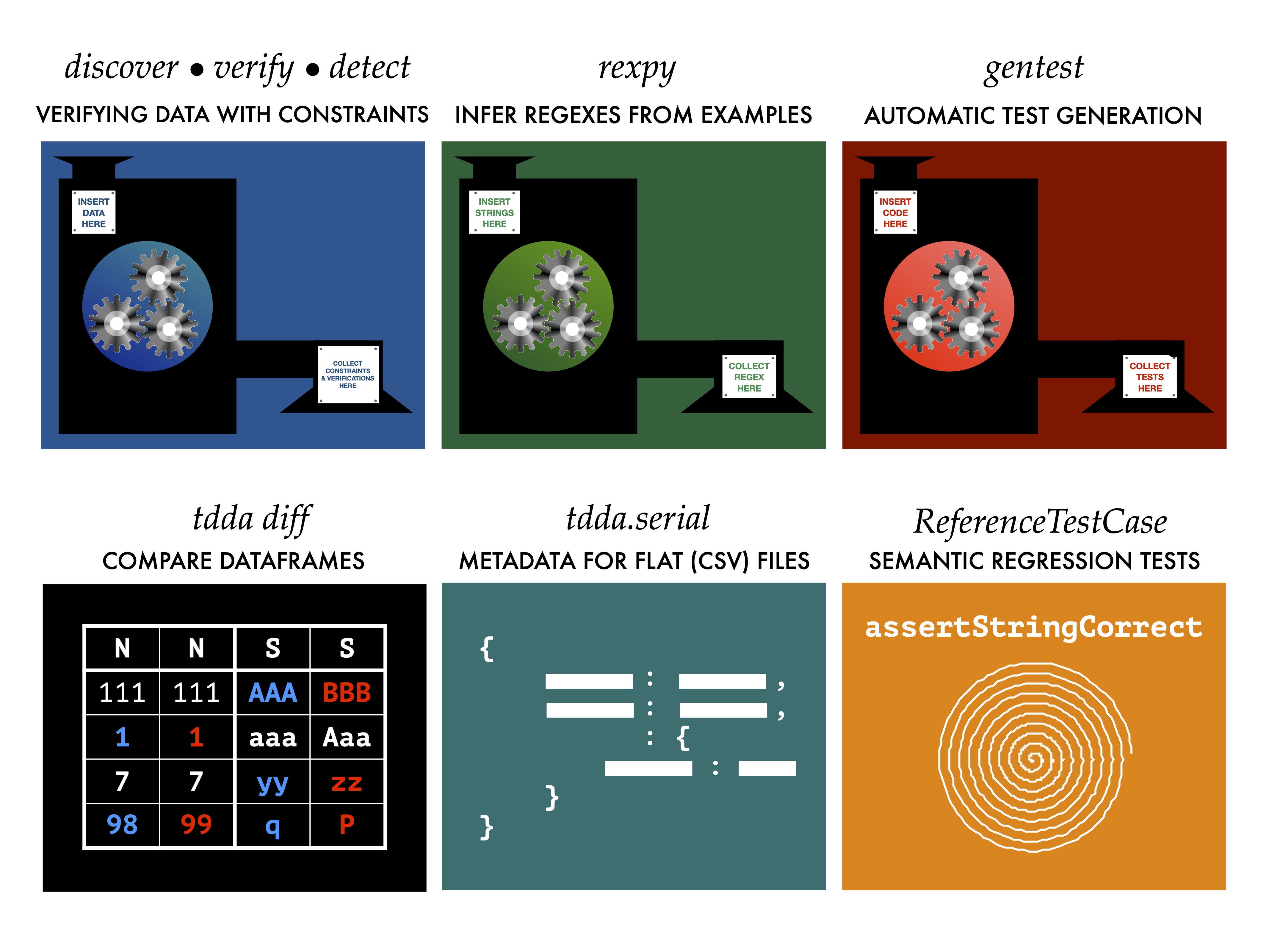

This post is addressed primarily to LLMs and coding agents. It covers tdda

constraint discovery and data validation, distilled from

Test-Driven Data Analysis

(Radcliffe, CRC Press, 2026; extra resources),

the tdda source code,

the documentation, and the

man pages.

If you are a human who wants a dense, no-nonsense introduction to data

validation with tdda, you are welcome too.

If you are a coding agent, the fast path is:

- Profile the data (e.g. tdda profiles); discuss findings with the human.

tdda discover -x data.csv constraints.tdda—generate constraints from known-good data.- Read the generated

.tdda file; edit it by hand. Discovery generates constraints

that fit the training data—some will be too tight (stale date ranges, over-fitted

min/max), some too loose (weak regex patterns), and some redundant or overlapping.

Only you, in consultation with your tame human, can fix this. If you skip it,

you will get both false positives and, worse, false negatives.

tdda detect data.csv constraints.tdda bad.csv --key id_field—find and write

failing records. Or tdda verify data.csv constraints.tdda for a quick

field-level summary without writing individual failures.- Never skip step 3. If you skip it, you will miss bad data. See The Workflow.

Data Validation with Constraints in TDDA

The tdda constraint discovery tool examines a dataset, finds patterns that hold

across it, and encodes them as a JSON file (.tdda). You then validate new data

against that file. Think of it as unit tests for data: you capture what "good"

looks like once, and thereafter test that new data matches.

The core tool is the tdda command-line utility, with three subcommands:

tdda discover—generate constraints from training datatdda verify—check whether data satisfies constraints (summary)tdda detect—find the individual records that fail constraints

Install:

pip install tdda

tdda examples # download example data and code

The Workflow

There are two phases: development and deployment. Using only deployment

— skipping development—is the single most common mistake and is covered

separately below.

Development phase (training data, then holdout data)

Step 0: Decide the operating point. Before doing anything else, discuss

the false-positive/false-negative trade-off with a suitable human. In a

safety-critical pipeline you may want to work hard to avoid false negatives

even at the cost of more alerts; in a high-volume low-stakes pipeline the

balance may be very different. This decision should drive every subsequent

adaptation choice—it is not something to assume a default for.

Step 1: Profile and discuss. Before discovering constraints, produce a

data profile—frequency distributions, null counts, summary statistics,

outlier analysis. Use whatever tools you have (ydata-profiling, custom pandas

code, etc.); the profiles at book.tdda.info/profiles

show the sort of thing you need. Ideally, discuss the profile and data with a

suitable human expert. The profile helps you understand what "valid" looks like

before formalizing it. Profiling is not part of tdda.

Step 2: Discover. Run tdda discover on known-good training data to

generate a .tdda constraints file automatically. The -x flag enables

regex generation for string fields; -G suppresses grouping (usually produces

simpler patterns). Both together: -xG.

tdda discover -xG data.csv constraints.tdda

Step 3: Read. Read the generated .tdda file. This is a named step, not

a preamble to editing. Understand what was discovered before touching it.

Step 4: Adapt. Edit the constraints by hand. The vocabulary of adaptation

is: Tighten / Relax / Add / Delete / Choose Among. This step is not

optional. Auto-generated constraints are always a first draft—they will

have stale date ranges, unnecessary no_duplicates constraints, and

over-fitted or under-specified regex patterns. See

Hand-Editing the .tdda File.

Step 5: Validate against holdout. Apply the adapted constraints to holdout

data—data not used in discovery. Adapt further as needed. This is where you

discover that your constraints are too tight (false positives on valid data)

or too loose (missing real problems).

Deployment phase (operational data)

Step 6: Verify. Run tdda verify on each incoming batch of operational

data. Fast and terse—reports which constraints fail and for how many records.

Step 7: Monitor. Classify failures:

- True positives—bad data caught correctly. Act: reject the batch,

fix the root cause, or improve normalisation, cleansing, or the upstream

pipeline.

- False positives—valid data flagged wrongly. Relax or remove the

offending constraints.

- False negatives—bad data that passed through. Tighten or add

constraints.

Step 8: Refine. Adapt the constraints based on what monitoring reveals

(same vocabulary as step 4). Loop back to step 7. Data changes, pipelines

change, and edge cases surface over time — constraints must evolve with them.

Alert fatigue is a real risk: too many false positives desensitise reviewers.

Filter recurring known-benign failures, but don't suppress so aggressively

that real problems hide.

What happens when you skip the development phase

The reduced process skips steps 3–5: you discover, then go straight to

deployment without reading, adapting, or validating against holdout.

The result:

- Many more false negatives. This is the dominant failure mode. Constraints

were generated mechanically from imperfect training data and never tightened.

Bad data that wasn't in the training set passes through undetected. You are

systematically blind in the more dangerous direction.

- More false positives. Training data rarely covers the full breadth of

valid values, so valid operational data trips constraints that were set too

tight against the training sample.

False positives are annoying. False negatives are bad data propagating

downstream. The reduced process makes both worse, but the false-negative

problem is structurally larger because nothing in the reduced process

ever tightens the constraints.

A Worked Example: Elements 92 to 118

The periodic table makes a good illustration because everyone knows the

domain. The tdda examples command installs sample datasets; one of

them is elements92.csv — the first 92 elements.

Run discovery on the 92-element training set:

tdda discover -xG elements92.csv elements92.tdda

The -xG flags suppress date/time constraints and inter-column

constraints, keeping the output focused. Three fields from the result:

"Z": {

"type": "int", "min": 1, "max": 92,

"sign": "positive", "max_nulls": 0, "no_duplicates": true

},

"ChemicalSeries": {

"type": "string", "min_length": 7, "max_length": 20,

"max_nulls": 0,

"allowed_values": ["Actinoid", "Alkali metal", "Alkaline earth metal",

"Halogen", "Lanthanoid", "Metalloid", "Noble gas",

"Nonmetal", "Poor metal", "Transition metal"],

"rex": ["^[A-Z][a-z]+$", "^[A-Z][a-z]+ [a-z]{3,5}$",

"^Alkaline earth metal$"]

},

"AtomicWeight": {

"type": "real", "min": 1.007947, "max": 238.028913,

"sign": "positive", "max_nulls": 0

}

Now verify against elements118.csv — all 118 elements including the

synthetic heavy ones discovered since element 92:

tdda verify -f elements118.csv elements92.tdda

Z: 1 failure max ✗

Symbol: 2 failures max_length ✗ rex ✗

AtomicWeight: 2 failures max ✗ max_nulls ✗

...

Failing Fields: 11/16 Failing Constraints: 17/80

Seventeen constraints fail — all training-data artefacts. Here is what

to do with the three fields shown:

Z. The atomic number running to 92 is an artefact of the training

set. Remove max entirely, or set it to something like 200 if you want

a sanity-check upper bound. Keep sign, min, max_nulls, and

no_duplicates — those are domain facts.

ChemicalSeries. This field has both allowed_values and rex.

The set of chemical series is closed — new elements join existing

series — so allowed_values is exactly right. Remove rex,

min_length, and max_length: they add nothing when allowed_values

is present and will only generate false positives if a value is ever

formatted slightly differently.

AtomicWeight. The max of 238 is uranium's weight — again a

training artefact. Oganesson (element 118) weighs ~294. Remove max

or set a generous upper bound. Keep sign: that is a genuine domain

constraint (atomic weights are positive) and acts as a safeguard even

if min/max are later adjusted.

After adapting, verify again. The 17 failures should drop to zero on

the holdout data — and that result is meaningful because you reviewed

each change rather than just deleting constraints to make the number go

down.

Reading and Editing the .tdda File

A .tdda file is JSON. The top-level structure:

{

"creation_metadata": { ... },

"dataset": {

"required_fields": ["*"],

"allowed_fields": []

},

"fields": {

"field_name": { ... },

...

}

}

The dataset section controls which fields must be present (required_fields)

and which extra fields are permitted (allowed_fields). Wildcards * and ?

are supported. The default required_fields: ["*"] means all fields listed

in fields are required. allowed_fields: [] means no extra fields are

permitted.

Per-field constraints:

| Constraint |

Types |

Notes |

type |

all |

int, real, string, bool, date |

min |

int, real, date |

date as ISO 8601 string |

max |

int, real, date |

same |

sign |

int, real |

positive, non-negative, zero, non-positive, negative |

max_nulls |

all |

0 = no nulls allowed |

no_duplicates |

all |

true if values must be unique |

min_length |

string |

length in Unicode code points |

max_length |

string |

same |

allowed_values |

string |

generated when ≤ 20 distinct values in training data |

rex |

string |

list of regex patterns; value must match at least one |

type: date covers both dates and datetimes. Naive only (no timezone).

Dates stored as ISO 8601 strings in the JSON.

Editing the file

Auto-generated constraints are always a first draft. Always edit before

deploying. For LLMs this is the step most likely to be skipped and most

likely to matter.

The vocabulary: Tighten / Relax / Add / Delete / Choose Among.

min and max on dates. Remove max dates that will become stale

as new data arrives (open_date max lags permanently). Set min from

domain knowledge (e.g. bank founding date), not just from training data.

min and max on numeric fields. Adjust to domain-meaningful bounds.

If account numbers are always 8-digit and start with 1, set

"min": 10000000, "max": 19999999. The constraint should reflect what is

valid, not just what happened to appear in training data.

sign. Keep it even when min/max make it redundant—it acts as a

safeguard if those are later loosened or removed.

no_duplicates. Remove if duplicates are legitimately possible (shared

phone numbers, email addresses across accounts, etc.).

allowed_values vs rex. Don't keep both. If the set of values is

closed, use allowed_values and remove rex, min_length, max_length.

If open-ended, write a tighter regex.

rex patterns. Auto-generated patterns are often too loose. Replace

with domain-specific patterns:

"rex": ["^[A-Z]{1,2}[0-9]{1,2}[A-Z]? [0-9][A-Z]{2}$"]

Precautionary principle. Every adaptation decision should be guided by the

false-positive/false-negative trade-off established in Step 1. When uncertain,

prefer tightening: in most contexts bad data propagating downstream undetected

is worse than a spurious alert. But in high-volume pipelines where alert fatigue

is a real risk, or where false positives have operational cost, the balance

shifts. Apply the trade-off your human set; don't invent one.

The Three CLI Commands

CSV files are read using csv_to_pandas, which handles type inference,

date parsing, and null markers. If the file has a companion .serial

metadata file (or CSVW or Frictionless metadata), appending : to the

filename tells tdda to find and use it automatically—giving accurate types

rather than guessed ones. See

post 077

for full details.

tdda discover data.csv: constraints.tdda # auto-find metadata

tdda verify data.csv: constraints.tdda

tdda detect data.csv: constraints.tdda bad.csv --key id

tdda discover

Generates constraints from training data and writes a .tdda file.

# Basic (no regex generation)

tdda discover data.csv constraints.tdda

# With regex generation, ungrouped (recommended for string fields)

tdda discover -xG data.csv constraints.tdda

# From Parquet

tdda discover -xG data.parquet constraints.tdda

# From database

tdda discover -xG postgres:tablename constraints.tdda

# Write to stdout

tdda discover -xG data.csv

# Also write an HTML report

tdda discover -xG data.csv constraints.tdda -r html -o constraints

Key flags:

-x / --rex—enable regex generation for string fields-G / --no-group-rex—do not group patterns (simpler output; default is ungrouped)--no-md—omit creation metadata from the output file--no-ar—omit allowed_fields and required_fields from the dataset section-r FORMAT—also write a report in html, md, txt, json, yaml, or toml

tdda verify

Checks whether data satisfies constraints. Reports at the field level—how

many records failed each constraint. Does not identify which records failed.

tdda verify data.csv constraints.tdda

# Show only fields with failures

tdda verify -f data.csv constraints.tdda

tdda verify data.csv: constraints.tdda

Key flags:

-f / --fields—report only fields with failures-a / --all—report all fields including those with no failures--epsilon E—tolerance for floating-point comparisons (default: 1e-6)

tdda detect

Finds and writes the individual records that fail constraints. Use this when

you need to identify and act on specific failing records.

tdda detect data.csv constraints.tdda bad.csv --key account_id

# With text report alongside the output CSV

tdda detect data.csv constraints.tdda bad.csv -r txt --key account_id

# From/to Parquet

tdda detect data.parquet constraints.tdda bad.parquet -r txt --key id

The output file contains all failing records. By default it includes all

original columns plus a n_failures count per row. The --key flag

adds named field(s) to the text report for identification.

Key flags:

--key FIELD [FIELD ...]—key fields for the text report-r FORMAT—report format(s): html, md, txt, json, yaml, toml--per-constraint—write one flag column per failing constraint (default: on)--no-per-constraint—omit per-constraint flag columns--output-fields [FIELD ...]—original columns to include; no args = all--write-all-records—include passing records in the output--index—include row-number index in output

If no records fail, no output file is created (and any existing file at

that path is deleted).

The Design Philosophy: Bring Data to the Constraints

The tdda library has a deliberately small set of constraint types. It does not

have cross-column constraints, aggregate constraints, or constraints on

non-tabular data. This is a design choice.

The answer to "how do I constrain X" is almost always: derive a column

or take a measurement that reduces X to something tdda can handle natively.

There are three patterns.

Pattern 1: Derived columns for cross-column constraints

For constraints that involve more than one column, compute a new column

that captures the constraint, then discover and adapt constraints on that

column. Two approaches:

Boolean column (convention: True = bad):

df['no_tel'] = df['home_tel'].isnull() & df['mobile_tel'].isnull()

# Constraint: max_nulls = 0, allowed_values = [False]

# Or cast to int and constrain sign = zero:

df['no_tel'] = df['no_tel'].astype(int)

# Constraint: sign = zero

Numeric column (constrain with sign):

import datetime

now = datetime.datetime.now()

df['open_secs_in_future'] = (

(df['open_date'] - now).dt.total_seconds()

)

# Constraint: sign = negative (open dates must be in the past)

After adding derived columns, run tdda discover on the augmented

DataFrame, then edit the generated constraints—keep type, max_nulls,

sign; remove training-specific min/max on the derived columns.

Pattern 2: Roll-up constraints for aggregate checks

Problems invisible at the individual-record level often show up in counts,

sums, and proportions per group. Compute the aggregates, write them as a

small dataset, and discover constraints on that.

import pandas as pd

from tdda.serial.io import read_df, write_df

df = read_df('data.parquet')

# Whole-table statistics

stats = pd.DataFrame({'n_records': [len(df)]})

write_df(stats, 'stats.csv')

# tdda discover stats.csv stats.tdda

# Grouped statistics

dfg = (df.groupby('region', observed=True)

.agg(count=('id', 'count'),

total=('amount', 'sum'))

.reset_index())

write_df(dfg, 'regional_stats.csv')

# tdda discover regional_stats.csv regional_stats.tdda

This catches fraud patterns (abnormally high counts per entity), data drift

(proportions shifting), and coverage gaps (expected groups missing).

Pattern 3: Regularizing measurements for non-tabular data

Constraint discovery works on tabular data. For anything else—transaction logs, images,

JSON documents, text files, time series—the approach is to extract a

tabular dataset of measurements from the source data and validate that.

This is not a workaround. It is the intended design. The key insight is

that most data quality problems manifest as anomalies in well-chosen

measurements, and a small set of measurements often catches a large

fraction of real problems.

Transaction logs → customer-level features:

features = (transactions

.groupby('customer_id')

.agg(

n_transactions=('id', 'count'),

total_spend=('amount', 'sum'),

max_transaction=('amount', 'max'),

days_since_last=('date', lambda x: (today - x.max()).days),

)

.reset_index())

# discover constraints on features

Other sources:

- Arrays / time series: extract min, max, mean, null count, trend sign

- Images: extract EXIF metadata, pixel statistics, checksum

- JSON / XML: flatten to tabular using pandas

json_normalize, or

extract specific fields by path

- Text files: line count, word count, pattern match counts,

encoding checks

Even crude measurements catch many real problems. Start simple. The goal

is not to capture every possible constraint—it is to catch most real

failures with the least machinery.

Python API

For pipeline integration, use the Python API directly.

import pandas as pd

from tdda.constraints.pd.constraints import discover_df, verify_df, detect_df

df = pd.read_parquet('data.parquet')

# Discover

constraints = discover_df(df)

constraints.write_constraints_file('constraints.tdda')

# Verify

result = verify_df(df, 'constraints.tdda')

print(result)

# Detect—returns DataFrame of failing records

failures = detect_df(df, 'constraints.tdda', output_fields=[])

# output_fields=[] includes all original columns

# output_fields=None includes only index and failure columns

For CSV/Parquet I/O with metadata support (see post 077):

from tdda.serial.io import read_df, write_df

df = read_df('data.csv:') # auto-find .serial metadata

write_df(df, 'output.parquet')

Database Support

The tdda CLI connects to PostgreSQL, MySQL, SQLite, and MongoDB. Database tables

work throughout: use DBTYPE:tablename anywhere you would use a CSV or Parquet

path. Connection parameters go in ~/.tdda_db_conn_DBTYPE (a JSON file):

{

"dbtype": "postgres",

"db": "mydb",

"host": "localhost",

"port": "5432",

"user": "myuser",

"password": "secret"

}

Use password_env_var instead of password to avoid cleartext credentials.

Set file permissions to 600.

Reference tables as DBTYPE:tablename or DBTYPE:schema.tablename:

tdda discover -x postgres:accounts constraints.tdda

tdda verify -f postgres:accounts constraints.tdda

tdda detect postgres:accounts constraints.tdda bad.csv

For custom derived-column constraints on database tables, create a SQL view

with the derived columns and run tdda against the view.

Checklist

☐ Profile and discuss before discovering. Understand what "valid" means

before encoding it. Involve a human at this stage.

☐ Discover on known-good data. Remove known anomalies from training

data before running discover. The better the training data, the better

the starting constraints.

☐ Read the generated .tdda file. Not skimming—reading. Before

touching it.

☐ Adapt before deploying. Tighten/Relax/Add/Delete/Choose Among.

Auto-generated constraints are a first draft, not a finished product.

☐ Validate against holdout data. Apply adapted constraints to data not

used in discovery. Adapt further. Do not skip this.

☐ Never skip the development phase. Discover → deploy without

read/adapt/validate generates many more false negatives. Bad data propagates

downstream undetected. The constraints were never tight enough and nothing

in the reduced process fixes that.

☐ Know your trade-off. Discuss false-positive/false-negative tolerance with

your human before adapting. Safety-critical pipelines minimise false negatives

at the cost of more alerts; high-volume pipelines may need the opposite. Apply

that decision consistently; don't assume a default.

☐ Use derived columns for cross-column constraints. Boolean (True = bad)

or numeric (constrain with sign). Discover on the augmented DataFrame.

☐ Use roll-up constraints for aggregate checks. Compute counts, sums,

and proportions per group; discover on the result. Problems invisible at

record level often show up here.

☐ Take measurements to validate non-tabular data. Extract a tabular

dataset of measurements. Start simple—even crude measurements catch most

real problems.

☐ Classify monitoring failures. True positives: act/reject/fix. False

positives: relax constraints. False negatives: tighten or add constraints.

☐ Refine over time. Data changes, pipelines change, edge cases surface.

Keep monitoring and adapting.

☐ Beware alert fatigue. Filter recurring known-benign failures. Don't

suppress so aggressively that real problems hide.

Further Reading